April 23, 2026: The day AI stopped chatting and started working

OpenAI's Workspace Agents mark a shift from isolated prompts to continuous autonomous work. Google air-gaps Gemini. Law firms face the death of the billable hour.

Today’s key AI stories

- OpenAI introduces Workspace Agents to handle complex workflows autonomously.

- Google and Cirrascale deploy air-gapped Gemini models that vanish when unplugged.

- Mozilla uses Anthropic's AI to patch 271 security flaws in Firefox.

- Law firms face the death of the billable hour as AI rewrites operations.

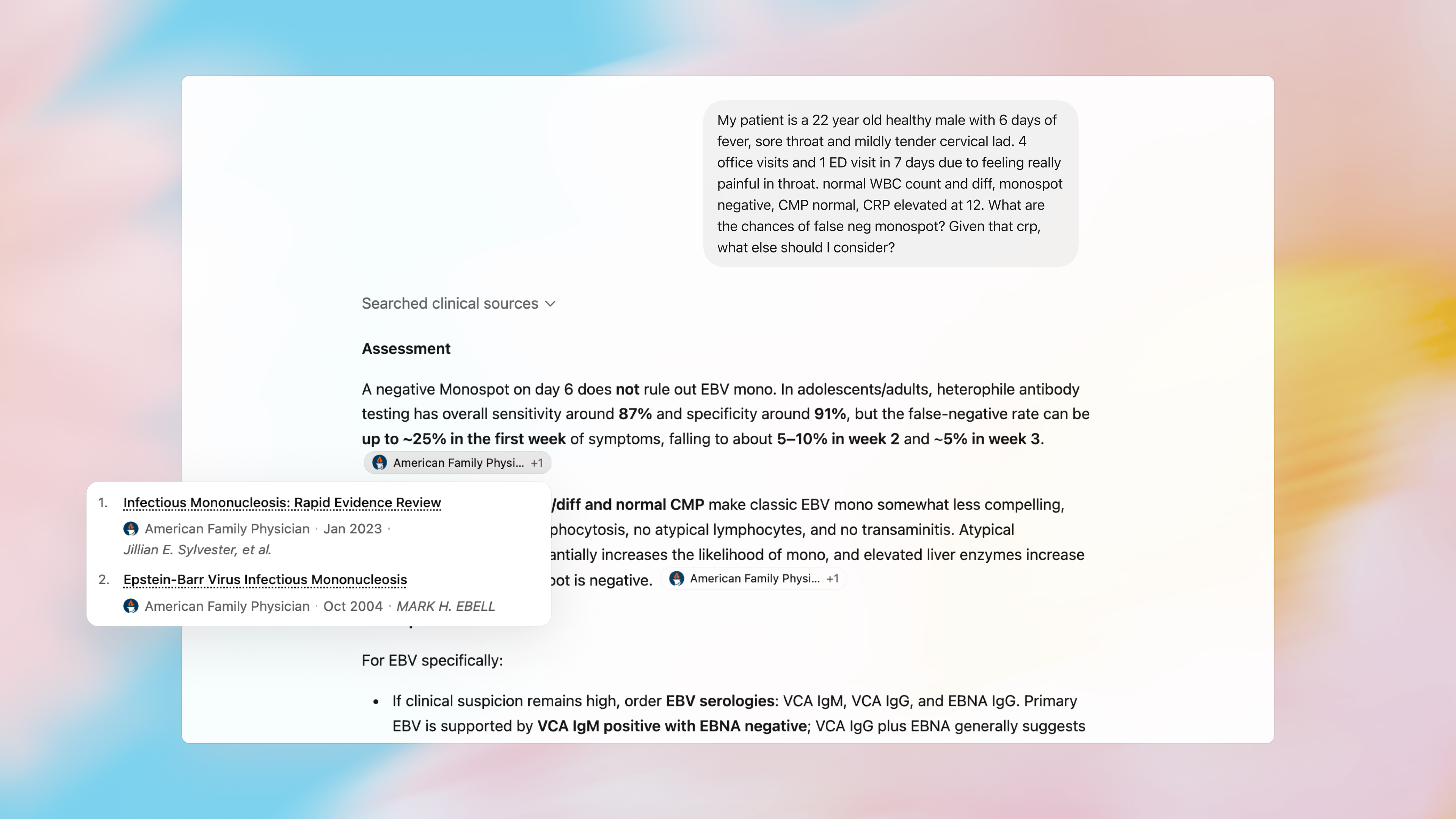

- ChatGPT for Clinicians becomes free for verified US healthcare workers.

- NVIDIA accelerates AI infrastructure with Blackwell servers and universal sparse tensors.

- A massive data shift occurs as tech giants race for private hardware and agentic code.

The end of the prompt

Let us talk about how we work. For the past few years, we treated AI like a very smart intern. We gave it a prompt. It gave us an answer. We checked the work. We moved on. It was a simple transaction. It was an isolated event. That era is officially ending today. We are moving from single prompts to continuous operations.

OpenAI just announced Workspace Agents. This is not just another chatbot update. These are Codex-powered agents. They live in the cloud. They do not sleep. You can assign them a role. You might create a Software Reviewer. You might build a Third-Party Risk Manager. You build it once. Your whole team uses it. It runs long and complex workflows in the background.

This requires a massive change in engineering. OpenAI also revealed how they made this possible. They changed their Responses API. They added WebSocket support. They built connection-scoped caching. Why does this matter? Because agents need to think fast. When an AI agent loops through a problem, it makes hundreds of small decisions. If every round trip is slow, those delays add up and the agent becomes useless. By using persistent connections, OpenAI says it cut latency by forty percent. The system now processes thousands of tokens per second. Much of the friction is gone. The agent is now a worker.
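A back-of-the-envelope model makes the latency argument concrete. The sketch below assumes a sequential agent loop in which every model call either opens a fresh connection (paying a fixed setup cost each time) or reuses one persistent connection (paying that cost once). The function name and every number are hypothetical illustrations, not measurements of OpenAI's Responses API, and the model ignores caching entirely.

```python
# Hypothetical latency model for a sequential agent loop.
# All numbers are illustrative assumptions, not measurements of any real API.


def loop_latency_ms(steps: int, setup_ms: float, work_ms: float, persistent: bool) -> float:
    """Total wall-clock time for `steps` sequential model calls.

    setup_ms:   assumed cost of opening a connection (handshakes, auth).
    work_ms:    assumed per-call cost of the request itself (network + compute).
    persistent: if True, one connection is opened once and reused;
                otherwise every call opens a fresh connection.
    """
    if steps < 0:
        raise ValueError("steps must be non-negative")
    connection_cost = setup_ms if persistent and steps > 0 else setup_ms * steps
    return connection_cost + work_ms * steps


if __name__ == "__main__":
    steps, setup_ms, work_ms = 200, 50.0, 100.0  # assumed values
    per_call = loop_latency_ms(steps, setup_ms, work_ms, persistent=False)
    reused = loop_latency_ms(steps, setup_ms, work_ms, persistent=True)
    print(f"fresh connection per call: {per_call:.0f} ms")  # 30000 ms
    print(f"one persistent connection: {reused:.0f} ms")  # 20050 ms
    print(f"time saved: {100 * (1 - reused / per_call):.1f}%")  # 33.2%
```

The saving grows with the number of steps, which is why per-call overhead matters far more for an agent that loops hundreds of times than for a single chat prompt. The 33% here comes only from the made-up inputs and is unrelated to OpenAI's reported figure.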

The death of the billable hour

If the AI is now a worker, what happens to the human? Let us look at the legal industry. A new report today highlights a massive shift in law firms. For decades, lawyers sold their time. They used the billable hour. If a contract took ten hours to review, the client paid for ten hours. It was a simple formula.

But what happens when an AI agent reviews that contract in three seconds? The correlation between time and income is broken. Law firms are panicking. They bought AI licenses early on to look innovative. Now, they actually have to use them. They have to rewrite their workflows. They must adopt value pricing.
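The broken link between time and income is simple arithmetic. The sketch below compares a firm's revenue on one contract review under hourly billing, before and after AI shortens the work, and under a flat value-based fee. The function name, the hourly rate, the fee, and the half hour of remaining human review are hypothetical numbers chosen for illustration; only the ten-hour baseline comes from the example above.

```python
# Hypothetical pricing comparison for a single contract review.
# The rate, fee, and hours are illustrative assumptions, not market data.


def compare_pricing(hours_before: float, hours_after: float,
                    hourly_rate: float, flat_fee: float) -> dict:
    """Revenue for one matter under hourly billing (before and after AI)
    and under a flat, value-based fee."""
    if hours_after <= 0:
        raise ValueError("hours_after must be positive")
    return {
        "hourly_before": hours_before * hourly_rate,
        "hourly_after": hours_after * hourly_rate,
        "flat_fee": flat_fee,
        # What the flat fee works out to per hour of remaining human review.
        "flat_fee_per_human_hour": flat_fee / hours_after,
    }


if __name__ == "__main__":
    result = compare_pricing(hours_before=10, hours_after=0.5,
                             hourly_rate=400.0, flat_fee=2500.0)
    for name, amount in result.items():
        print(f"{name:>24}: ${amount:,.2f}")
```

With these made-up numbers, hourly billing collapses the firm's revenue from $4,000 to $200 once the review takes half an hour. The flat fee charges the client less than before while paying the firm $5,000 per hour of human time, which is the pressure toward value pricing this section describes.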

This is a painful transition. Firms must decide where the human review sits. They have to rethink their entire business model. If they try to keep billing by the hour while using AI, a competitor will destroy them. A new, leaner firm will offer the same result for a fraction of the cost. The clients will demand it. Technology always forces efficiency. The legal sector is just the first domino to fall.

Paranoia meets practicality

As AI takes over real work, a new problem emerges. Data security. Corporations are terrified. They do not want their sensitive data living in a public cloud. They want control. Today, Google answered that fear.

Google Cloud partnered with Cirrascale. They are putting the Gemini model inside a physical box. It is a Dell server packed with eight NVIDIA GPUs. It is completely air-gapped. That means it has no connection to the outside internet. You can put it in your own basement. You can keep it totally isolated.

Here is the brilliant part. The Gemini model only lives in volatile memory. It is never saved to a hard drive. If someone tries to tamper with the machine, it shuts down. If you pull the plug, the AI vanishes. It is gone forever. This is military-grade paranoia. And it is exactly what big business wants. They want the power of AI without the risk of a data breach. Hardware is becoming the new software.

The shifting economics of security

While Google locks AI in a box, others are using it to hunt. Cybersecurity has always been unfair. The attacker only needs to be right once. The defender has to be right every single time. Finding vulnerabilities is slow. It requires expensive human talent. But that math is changing.

Today we learned that Mozilla used Anthropic's Claude Mythos AI. They used it to scan Firefox. The AI found 271 security vulnerabilities. It found them quickly. It helped fix them. The cost of defense is finally dropping. AI-automated vulnerability discovery is reversing the traditional advantage held by hackers. When you can deploy a swarm of AI agents to read millions of lines of code, you change the rules of the game.
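The "right once versus right every time" asymmetry can be made precise with a toy probability model. Assume, purely for illustration, that each of n unpatched flaws is independently found by an attacker with the same probability p; the chance the attacker finds at least one is then 1 - (1 - p)^n. The sketch below starts from Mozilla's reported count of 271 flaws; the value of p, the independence assumption, and the function name are all hypothetical.

```python
# Toy model of the attacker/defender asymmetry.
# Assumes each open flaw is found independently with the same probability,
# a deliberate simplification; p below is a made-up illustrative value.


def p_attacker_wins(open_flaws: int, p_find_each: float) -> float:
    """Probability that an attacker finds at least one of `open_flaws` flaws."""
    if open_flaws < 0:
        raise ValueError("open_flaws must be non-negative")
    if not 0.0 <= p_find_each <= 1.0:
        raise ValueError("p_find_each must be between 0 and 1")
    # The defender "wins" only if every single flaw stays hidden.
    return 1.0 - (1.0 - p_find_each) ** open_flaws


if __name__ == "__main__":
    p = 0.01  # assumed per-flaw discovery probability
    for remaining in (271, 100, 10, 1, 0):
        chance = p_attacker_wins(remaining, p)
        print(f"{remaining:>3} open flaws -> attacker success probability {chance:.3f}")
```

Even a small per-flaw chance compounds across hundreds of flaws, so cheaply shrinking n, the number of flaws left open, is the defender's main lever in this model.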

Healing the system

This automation is not just for code. It is for humans. OpenAI made a quiet but massive move today. They made ChatGPT for Clinicians completely free. Any verified doctor, nurse practitioner, or pharmacist in the US can use it. It is not just a basic model. It runs on GPT-5.4. OpenAI says it outperformed human physicians in its testing.

Why is it free? Because data is the ultimate currency. By giving this tool to doctors, OpenAI embeds itself into the healthcare system. The AI handles referral letters. It handles prior authorizations. It reads medical journals. It takes away the paperwork that causes doctor burnout. When an AI proves it is safe and accurate ninety-nine percent of the time, resistance fades. Healthcare is a complex maze. AI is becoming the map.

The silent engine underneath

None of this happens by magic. It requires immense physical power. It requires brutal computation. NVIDIA continues to pave the roads for this new world. Today they showed off their RTX PRO 4500 Blackwell Server Edition. They packed it with high-speed memory. They updated their virtual GPU software.

They are also changing the math itself. NVIDIA integrated the Universal Sparse Tensor into their Python math libraries. This sounds incredibly dry. It sounds like pure engineering. But it matters deeply. It lets developers store and process only the nonzero values in their data, skipping heavy memory loads. NVIDIA says it can speed up some calculations by more than four hundred times. They also pushed new optimizers into their Megatron training software. They are making it cheaper and faster to train massive models like Kimi and Qwen. The engine is getting smaller, but the horsepower is doubling.
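The idea behind sparse formats is to store and touch only the nonzero entries. The sketch below uses compressed sparse row (CSR), one common sparse layout, written in plain Python. It is not the API of NVIDIA's Universal Sparse Tensor or of its Python libraries, and the function names are hypothetical; it only shows how a matrix-vector product can skip every zero, which is where the memory and compute savings come from.

```python
# Compressed sparse row (CSR) sketch: store only nonzero values, then
# multiply without ever reading the zeros. Plain Python, no NVIDIA APIs.
from typing import List, Tuple


def to_csr(dense: List[List[float]]) -> Tuple[List[float], List[int], List[int]]:
    """Convert a dense row-major matrix to CSR arrays (values, col_idx, row_ptr)."""
    values: List[float] = []
    col_idx: List[int] = []
    row_ptr: List[int] = [0]
    for row in dense:
        for j, x in enumerate(row):
            if x != 0:
                values.append(x)
                col_idx.append(j)
        # row_ptr[i]..row_ptr[i+1] delimits row i's entries in values/col_idx.
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def csr_matvec(values: List[float], col_idx: List[int],
               row_ptr: List[int], x: List[float]) -> List[float]:
    """Compute y = A @ x using only the stored nonzeros of A."""
    y: List[float] = []
    for i in range(len(row_ptr) - 1):
        acc = 0.0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


if __name__ == "__main__":
    dense = [[0.0, 0.0, 3.0],
             [0.0, 0.0, 0.0],
             [4.0, 0.0, 5.0]]
    values, col_idx, row_ptr = to_csr(dense)
    print(values, col_idx, row_ptr)  # [3.0, 4.0, 5.0] [2, 0, 2] [0, 1, 1, 3]
    print(csr_matvec(values, col_idx, row_ptr, [1.0, 2.0, 3.0]))  # [9.0, 0.0, 19.0]
```

Here only 3 of the 9 entries are stored and multiplied. Real speedups depend on how sparse the data is and on the hardware; the four-hundredfold figure is NVIDIA's claim, not something this toy reproduces.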

The messy reality of transition

We must also look at the shadows. The transition to an automated world is rarely smooth. MIT Technology Review published a stark list of realities today. Meta is reportedly installing tracking software to watch their workers' clicks. They want the data to train their AI. Employees are naturally angry. The line between worker and training data is blurring.

We also see signs of pure chaos. An unauthorized group reportedly accessed Anthropic's restricted Mythos model. The model was deemed too dangerous for public release. Yet outsiders still got in. We see SpaceX preparing to buy an AI coding startup for sixty billion dollars. The sheer scale of capital is staggering. The stakes are getting incredibly high. Governments are noticing. China is actively stopping AI talent from leaving the country. The FBI is investigating the deaths of researchers working in sensitive fields. The technology is no longer just a product. It is a matter of national security.

What it means

We are crossing a very specific threshold today. The experimental phase is over. We are entering the operational phase. You can see it in the language we use. We are no longer talking about parameters. We are talking about workflows. We are talking about agents, air gaps, and billable hours.

This shift requires a new mindset. You can no longer just type a clever prompt. You have to design a system. We see developers turning to structured coding environments. They are using Claude Code Skills to build repeatable workflows. They are using causal inference to test whether their conclusions actually hold. As one data scientist wrote today, we must combat the culture of sloppy prompting. We need the scientific method. We need clear hypotheses.

The AI is ready to work. It will sit on an unplugged server in a basement. It will scan code for bugs. It will write medical referrals. It will review legal contracts. The question is no longer what the AI can do. The question is how fast you can rebuild your business around it. The old models are breaking. The companies that survive will be the ones that let the agents run.