February 24, 2026: AI Is No Longer Your Copilot. It's Becoming the Pilot.

AI agents are taking over, from payments to factories. But rapid deployment reveals critical flaws in security, reliability, and measurement. Are we ready for the AI pilot?

Today’s Key AI Stories

- Mastercard demos an AI agent making a fully authenticated purchase on its own.

- A new standard called MCP is letting AI agents use any tool, but has major security flaws.

- OpenAI warns a key AI coding benchmark (SWE-bench) is contaminated, making progress hard to measure.

- Anthropic accuses Chinese AI labs of stealing its model's outputs on a massive scale.

- The AI job market isn't dead. It's evolving into highly specialized senior roles, squeezing junior positions.

- NVIDIA and Hitachi are pushing AI into the physical world, from faster chips to smarter factories.

The Age of AI Agents Is Here. And We're Not Ready.

Something quietly profound happened this week. Mastercard showed an AI buying a product. Not helping a human buy it. Not suggesting a product. The AI did it all. It searched. It chose. It paid. All by itself.

This wasn't just a tech demo. It was a signal. The AI revolution has entered a new phase. AI is no longer just a clever tool. It’s not your copilot. It’s becoming the pilot.

We call these systems "agents." An AI agent doesn't just answer your questions. It takes action. It plans a task. It uses tools. It achieves a goal in the real world. For years, this was science fiction. Now, the pieces are clicking into place. And it's happening faster than we can keep up.

Part 1: The Agent Takeover is Already Happening

Look around. The agent economy is being built right now. It's not one single technology. It's a stack of innovations coming together.

First, you have the practical applications. They are everywhere.

In India, 3.6 million dairy farmers now have an AI assistant named Sarlaben. It gives them personalized advice on their cattle. It's not a search engine. It's an agent acting as an agricultural consultant, available 24/7. This is last-mile AI, changing lives at a massive scale.

In Japan, Hitachi is building "physical AI." Their agents diagnose faults in air conditioners. They help reroute trains during malfunctions. This isn't about writing emails. This is AI with its hands on the levers of the physical world.

Inside our own companies, the change is happening too. Developers are using tools like Claude Code to build internal applications in hours, not weeks. These tools automate repetitive tasks. They make teams more efficient. They are simple, focused agents doing the work an intern might have done.

But how do these agents *do* things? They need to talk to the world's digital tools. This is where a new standard called the Model Context Protocol, or MCP, comes in. Think of it like a universal adapter. It's a common language that lets any AI model connect to any tool. Your calendar. Your email. A factory robot. A payment system.
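What does that "common language" look like on the wire? Here is a minimal sketch, not a real MCP server. It assumes only what the protocol itself uses: JSON-RPC 2.0 messages and a `tools/call` method that carries a tool name and its arguments. The tool names (`calendar.add_event`, `email.send`) and the `handle` function are hypothetical, made up for illustration.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tools for illustration; a real server exposes its own.
def add_event(title: str, day: str) -> str:
    return f"Scheduled '{title}' on {day}"

def send_email(to: str, subject: str) -> str:
    return f"Email to {to}: {subject}"

TOOLS: Dict[str, Callable[..., str]] = {
    "calendar.add_event": add_event,
    "email.send": send_email,
}

def make_tool_call(request_id: int, name: str, arguments: Dict[str, Any]) -> str:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })

def handle(raw: str) -> Dict[str, Any]:
    """Toy server side: look up the named tool and run it."""
    req = json.loads(raw)
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name", ""))
    if req.get("method") != "tools/call" or tool is None:
        # -32601 is the JSON-RPC "method not found" code, reused here for simplicity.
        return {"jsonrpc": "2.0", "id": req.get("id"),
                "error": {"code": -32601, "message": "Method or tool not found"}}
    text = tool(**params.get("arguments", {}))
    return {"jsonrpc": "2.0", "id": req.get("id"),
            "result": {"content": [{"type": "text", "text": text}]}}

reply = handle(make_tool_call(1, "calendar.add_event",
                              {"title": "Standup", "day": "Monday"}))
print(reply["result"]["content"][0]["text"])
```

The point of the sketch: the model never needs to know how the calendar works. It only needs to produce one message shape. Notice what is missing, too. Nothing in `handle` asks who is calling.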

MCP's adoption has been explosive. It solves a huge problem. But its rapid rise is also the perfect example of our industry's biggest problem: we are moving too fast. We are building on sand.

Part 2: The Growing Pains of a Rushed Revolution

We're constructing a world run by AI agents. But we are skipping some critical steps. We're so excited about what they can do, we're not thinking enough about how they can fail.

The Security Blind Spot

MCP, the universal adapter for AI agents, shipped with a serious flaw. The first version of the specification defined no authentication. At all. Unless a server added its own checks, any client that could reach it could call its tools. It's like fitting every house on Earth with the same standard door but forgetting to give it a lock.

Developers are scrambling to fix this. But the problem is complex. When an agent acts, who is in charge? The user who gave the order? The company that built the agent? The tool provider? We don't have settled answers. And this leads to vulnerabilities like prompt injection. A malicious actor can hide instructions in content your agent reads: a web page, an email, a tool's output. They can trick it into doing things you never intended. It's like giving your agent a credit card, but anyone can tell it what to buy.
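One common mitigation is to stop trusting the agent's own judgment for risky actions. Here is a minimal sketch of a policy gate that sits between the agent and its tools. Everything in it is an assumption for illustration: the `ToolPolicy` class, the `authorize` function, the tool names, and the spending cap. It does not prevent prompt injection. It only limits what an injected instruction can do.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Set

@dataclass
class ToolPolicy:
    """Hypothetical per-user policy, set by the human and never by the model."""
    allowed: Set[str]
    needs_confirmation: Set[str] = field(default_factory=set)
    max_spend: float = 0.0

def authorize(policy: ToolPolicy, tool: str, args: Dict[str, Any],
              user_confirmed: bool = False) -> str:
    """Return 'allow', 'ask_user', or 'deny' for a tool call the agent proposes."""
    if tool not in policy.allowed:
        return "deny"          # tool is not on the allowlist
    if tool in policy.needs_confirmation:
        if float(args.get("amount", 0.0)) > policy.max_spend:
            return "deny"      # over the cap, even if confirmed
        if not user_confirmed:
            return "ask_user"  # a human must approve sensitive calls
    return "allow"

policy = ToolPolicy(allowed={"search", "pay"},
                    needs_confirmation={"pay"}, max_spend=50.0)
print(authorize(policy, "search", {}))
print(authorize(policy, "pay", {"amount": 20}))
print(authorize(policy, "pay", {"amount": 500}, user_confirmed=True))
```

In credit card terms: the agent still holds the card, but the card has a limit, and the big purchases need your signature.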

The Reliability Crisis

Agents are not magic. They are complex software systems. And they fail. A lot. Building a simple chatbot is easy. Building an agent that can reliably complete a 10-step task is incredibly hard.
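Why is 10 steps so much harder than one? A back-of-the-envelope sketch helps. It assumes every step succeeds with the same probability and that steps fail independently. Real agents are messier, and the per-step numbers below are illustrative, not measured.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps

for p in (0.90, 0.95, 0.99):
    print(f"{p:.2f} per step -> {chain_success(p, 10):.1%} over 10 steps")
```

Under these assumptions, an agent that is right 95% of the time per step finishes a 10-step task only about 60% of the time. At 90% per step, it drops to roughly 35%. Small errors compound.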

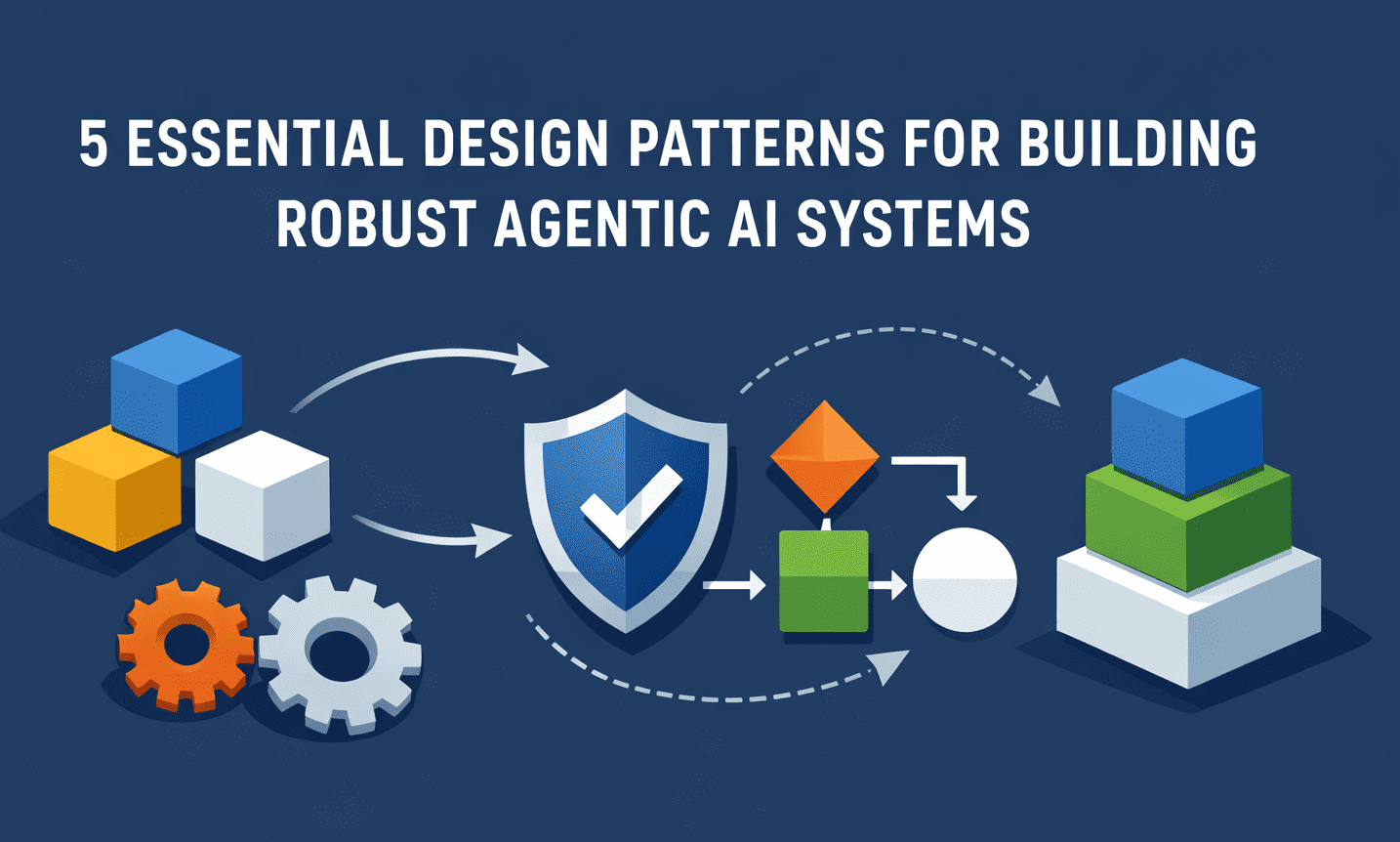

This is why we're seeing the rise of "agentic design patterns." These are blueprints for building robust agents. Patterns like a "Reviewer-Critic" loop. One agent does the work. A second agent checks its homework. This adds a layer of quality control. We need these structures to move agents from cool demos to dependable production systems.
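The check-its-homework loop described above can be sketched in a few lines. This is one possible shape, not a standard implementation. The `run_with_review` function and the toy worker and reviewer are hypothetical stand-ins. In a real system, each would wrap a model call.

```python
from typing import Callable, Optional, Tuple

Worker = Callable[[str, Optional[str]], str]        # (task, feedback) -> draft
Reviewer = Callable[[str, str], Tuple[bool, str]]   # (task, draft) -> (approved, feedback)

def run_with_review(task: str, worker: Worker, reviewer: Reviewer,
                    max_rounds: int = 3) -> Tuple[str, bool, int]:
    """Alternate worker and reviewer until approval or the round limit."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = worker(task, feedback)              # do the work
        approved, feedback = reviewer(task, draft)  # check the homework
        if approved:
            return draft, True, round_no
    return draft, False, max_rounds                 # give up and flag for a human

# Toy stand-ins: the worker only fixes its output after getting feedback.
def toy_worker(task: str, feedback: Optional[str]) -> str:
    return task.upper() if feedback else task

def toy_reviewer(task: str, draft: str) -> Tuple[bool, str]:
    ok = draft.isupper()
    return ok, ("" if ok else "Use upper case.")

print(run_with_review("ship it", toy_worker, toy_reviewer))
```

The round limit matters. Without it, two agents that disagree can loop forever. Returning the unapproved draft with a `False` flag lets a human step in.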

The Measurement Meltdown

This might be the most dangerous problem. How do we know if our AI is getting better? We test it. We use benchmarks. For coding, one of the most important benchmarks has been SWE-bench.

This week, OpenAI dropped a bombshell. They said SWE-bench is now "contaminated." The problems and their solutions are all over the internet. The new AI models have seen them in their training data. It's like giving students the answer key before the final exam. Their scores go up. But are they actually smarter? Not necessarily.

This means our ruler is broken. We can't accurately measure progress. Improvements on this benchmark no longer reliably reflect real-world skill. They may just reflect how much of the test the model has memorized. We are flying blind, celebrating higher scores that might not mean anything.
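How would you even check for a leaked answer key? One common heuristic is n-gram overlap: count how many word sequences from a benchmark item also appear verbatim in training text. The sketch below is a toy version of that idea. It is not OpenAI's method, the function names and sample strings are made up, and it misses paraphrased leaks entirely.

```python
from typing import Iterable, Set, Tuple

def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """All runs of n consecutive lowercase, whitespace-split tokens."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def overlap_ratio(benchmark_item: str, corpus_docs: Iterable[str],
                  n: int = 8) -> float:
    """Fraction of the item's n-grams that appear verbatim in the corpus."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0  # item shorter than n tokens: nothing to compare
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)

item = "fix the off by one error in the pagination helper function"
leaked = ["blog post: " + item + " and here is the patch"]
clean = ["an unrelated article about dairy farming in india"]

print(overlap_ratio(item, leaked, n=3))
print(overlap_ratio(item, clean, n=3))
```

A high ratio is a red flag, not proof. A low ratio proves even less. That asymmetry is part of why contamination is so hard to rule out.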

Part 3: The Human Impact

This agentic shift changes everything for us. Our jobs, our companies, and even our perception of reality.

Your Job is Not Dying. It's Evolving.

The AI and data science job market isn't dead. But the role of the generalist is fading. A few years ago, a data scientist was a Swiss Army Knife. They did a little of everything. Now, GenAI can do the simple, junior-level tasks.

The market is fragmenting into specialties. Companies need:

1. **Analysts:** People who understand the business and can use AI to answer deep questions.

2. **Engineers:** People who can build and deploy production-grade AI systems.

3. **Infrastructure Specialists:** People who build the data pipelines that feed the models.

Junior roles are getting squeezed. Senior, specialized roles are booming. The demand is shifting from *using* the tools to *building and directing* the agent-based systems.

The Competition Gets Ugly

As the stakes rise, the competition intensifies. This week, Anthropic accused three Chinese AI labs of a massive scheme. The labs allegedly used 24,000 fake accounts to query Claude's API over 16 million times. In essence, they are accused of siphoning off the model's outputs to train their own models.

This is a sign of what's to come. The race for AI supremacy is not just a technology race. It is an economic war. And the primary resource is high-quality data and model outputs. The moats companies are building are becoming targets.

The Hidden Human

Finally, we must remember the illusion of autonomy. We see a humanoid robot perform a task. We assume it is autonomous. But often, there is a human behind the curtain, controlling it remotely. This "hidden labor" makes us overestimate AI's true capabilities.

The same is true for AI agents. They seem independent. But they are guided by human-written prompts, human-designed tools, and human-curated data. We are still in the loop. Even when it looks like we aren't.

Conclusion: From Pilot to Air Traffic Control

AI has the keys. It is moving from the copilot's seat to the pilot's. It can now fly the plane.

This is a fundamental shift. Our job is no longer to be a better pilot. Our job is to build the air traffic control system. We need to design the flight paths (business goals). We need to build the safety protocols (security and reliability). We need to create the radar systems (accurate measurement). And we need to write the rules of the sky (ethics and regulation).

The winners of the next decade won't be the ones who build the fastest AI pilot. They will be the ones who design the smartest air traffic control system for a world full of them.