March 05, 2026: AI's Reality Check: The Hard Work Has Just Begun

AI demos are easy, but real-world deployment is hard. Discover why most AI projects fail beyond the prototype stage and the engineering challenges ahead.

Today’s Key AI Stories

- Microsoft's Phi-4-reasoning-vision-15B: A new small, open-weight model that challenges the 'bigger is better' mantra by matching larger models on performance with a fraction of the data and compute. It cleverly knows when to 'think' and when to just answer.

- Google Teaches LLMs Bayesian Reasoning: Researchers are fine-tuning models to mimic optimal probabilistic reasoning, improving performance and enabling them to generalize learned skills to new domains. It's a step toward making AI less of a pattern-matcher and more of an adaptive agent.

- Black Forest Labs' Self-Flow: A new technique makes training multimodal AI models 2.8 times more efficient by allowing them to learn representation and generation simultaneously, without external 'teacher' models.

- The "Prototype Mirage": A widespread problem in enterprises where countless AI prototypes are built, but few ever make it to production because of reliability problems, cost, and a lack of engineering discipline.

- AI's Problem-Framing Crisis: An argument that over 80% of AI projects fail not from bad models, but from solving the wrong problem. The industry's focus on hyperparameter tuning is a distraction from the real, high-impact work of defining the business need correctly.

- The Rise of Physical AI: A global race is on. The West (Google, NVIDIA, Siemens) is building the software platforms, the 'Android for robotics', while the East (China) is dominating hardware and manufacturing scale.

- AI in Theoretical Physics: OpenAI used GPT-5.2 Pro to help discover a new mathematical result in quantum gravity, showing AI's potential as a research partner in complex scientific domains.

- Optimizing the Core: A deep dive from NVIDIA on tuning Flash Attention with cuTile, illustrating the complex, low-level engineering required to squeeze maximum performance from GPUs.

- AI & Geopolitics: Reports indicate Anthropic's AI tool, Claude, is being used to help identify and prioritize targets for US strikes on Iran, highlighting the technology's rapid integration into military operations.

- AI's Economic Engine: A study finds that autonomous AI agents, when given a choice, overwhelmingly prefer Bitcoin for storing value, signaling a need to rethink corporate financial infrastructure for a machine-to-machine economy.

The End of AI's Easy Wins: Welcome to the Hard Part

Building an AI demo is now trivially easy. You can do it in an afternoon. Ask an AI to code an app, and it will. This is the era of 'vibe coding'. It feels like magic. It feels like progress. But it's an illusion. A dangerous one.

This is the 'Prototype Mirage'. Enterprises are drowning in impressive demos. AI agents that work perfectly in a controlled notebook. But they break when they touch the messy, unpredictable real world. Most never become actual products. Why? Because the easy part is over. The hard part has just begun.

AI is no longer a magic show. It is a grueling engineering challenge. The conversation is shifting. It's moving from 'what can AI do?' to 'how do we make it work, reliably, efficiently, and for the right problems?' This is the gritty, unglamorous work that will define the next chapter of the AI revolution.

First, Solve the Right Problem

The biggest failure in AI isn't a bad model. It's a framing failure. By this argument, more than 80% of AI projects fail because they solve the wrong problem. We spend weeks tuning hyperparameters. Squeezing another 0.2% out of a validation metric. This feels productive. It is not.

Zillow lost $500 million not because its model was bad. It lost money because 'predict home value' was the wrong problem to solve: in a cooling market, the predictions did not fit the speed at which the company could actually operate. Researchers built an AI that detected skin cancer with incredible accuracy. But it wasn't looking at lesions. It was looking for rulers, which doctors tend to place next to suspicious moles. The model was a world-class ruler detector.

The real work happens before you write any code. It’s a conversation. What decision will this model change? Who makes it? What is the cost of a false positive versus a false negative? If you can't answer these questions, you don't have a project. You have a science experiment.
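The false-positive versus false-negative question can be made concrete before any model is tuned. Here is a minimal Python sketch, assuming a binary classifier that outputs scores and a small labeled validation set. Every name and number in it (`ErrorCosts`, `total_cost`, `best_threshold`, the scores, the costs) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorCosts:
    false_positive: float  # cost of acting when we should not have
    false_negative: float  # cost of failing to act when we should have


def total_cost(threshold, scores, labels, costs):
    """Sum the cost of every mistake made when we act on scores >= threshold."""
    total = 0.0
    for score, label in zip(scores, labels):
        predicted_positive = score >= threshold
        if predicted_positive and label == 0:
            total += costs.false_positive
        elif not predicted_positive and label == 1:
            total += costs.false_negative
    return total


def best_threshold(scores, labels, costs, candidates):
    """Pick the candidate threshold with the lowest total cost."""
    return min(candidates, key=lambda t: total_cost(t, scores, labels, costs))


# Hypothetical validation data: model scores and true labels (1 = positive).
scores = [0.05, 0.20, 0.35, 0.40, 0.60, 0.70, 0.85, 0.95]
labels = [0, 0, 0, 1, 0, 1, 1, 1]
candidates = [0.1, 0.3, 0.5, 0.65, 0.9]

# Same model, same data, two different business framings.
symmetric = ErrorCosts(false_positive=1.0, false_negative=1.0)
miss_is_costly = ErrorCosts(false_positive=1.0, false_negative=20.0)

print(best_threshold(scores, labels, symmetric, candidates))       # 0.65
print(best_threshold(scores, labels, miss_is_costly, candidates))  # 0.3
```

The model never changes here. Only the cost assumptions do, and the best operating point moves from 0.65 to 0.3. That is the framing conversation expressed in numbers.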

The Efficiency Mandate: Smart Over Scale

For years, the industry chanted a simple mantra: bigger is better. More parameters. More data. More compute. That era is ending. The future is not about brute force. It's about efficiency.

Microsoft's new Phi-4-reasoning-vision-15B model is the poster child for this shift. It's a 15-billion-parameter model. A lightweight by today's standards. Yet it competes with models five times its size. How? Not with scale, but with meticulous data curation and a clever architecture.

The team manually reviewed their data. They fixed errors in widely used open-source datasets. They created a 'mixed reasoning' model. It knows when to 'think' step-by-step for complex problems like calculus. And it knows when thinking is a waste of time, like for simple image captioning. It spends its compute budget wisely.

This obsession with efficiency is happening at every level. Black Forest Labs' Self-Flow technique makes multimodal training 2.8 times more efficient. NVIDIA's engineers are deep in the weeds, optimizing Flash Attention to squeeze every drop of performance from their GPUs. This isn't just about saving money. It's about making AI practical enough to deploy everywhere, from your phone to a factory floor.

Beyond Mimicry: Teaching AI to Actually Think

Large Language Models are incredible mimics. They predict the next word with stunning accuracy. But is that the same as understanding? Or reasoning? The next frontier is moving beyond pattern matching. It's about deliberately teaching AI to use structured, optimal reasoning frameworks.

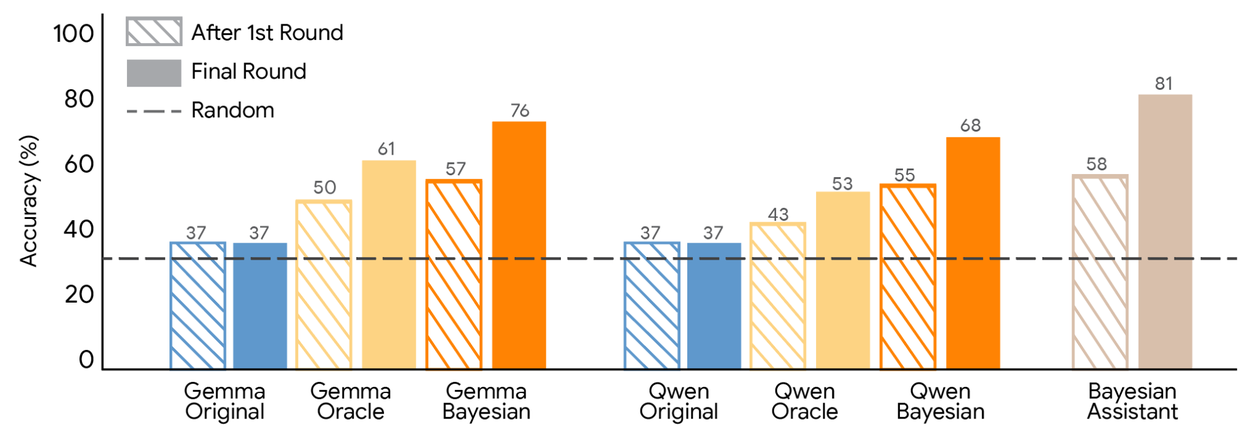

Look at what Google is doing. They are teaching LLMs to reason like Bayesians. Bayesian inference is the optimal way to update beliefs based on new evidence. Humans are bad at it. Untrained LLMs are also bad at it. But by fine-tuning an LLM to mimic the outputs of a perfect Bayesian model, Google made it better at the task. More importantly, the LLM could then generalize this probabilistic reasoning skill to new, unseen domains.
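For readers who want to see what "updating beliefs based on new evidence" means mechanically, here is a minimal Python sketch of Bayes' rule over a handful of discrete hypotheses. It is not Google's setup or training code. The function name `bayes_update`, the two hypotheses, and all probabilities are assumptions made up for illustration.

```python
def bayes_update(prior, likelihood):
    """Return the posterior P(h | evidence) for each hypothesis h.

    prior:      dict mapping hypothesis -> P(h)
    likelihood: dict mapping hypothesis -> P(evidence | h)
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())  # P(evidence), the normalizer
    if evidence == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: p / evidence for h, p in unnormalized.items()}


# Hypothetical question: does this user care more about price or about speed?
belief = {"prefers_cheap": 0.5, "prefers_fast": 0.5}

# Each observation gives the probability of the observed choice under each
# hypothesis. These numbers are invented for the example.
observations = [
    {"prefers_cheap": 0.8, "prefers_fast": 0.3},  # user picked the cheaper option
    {"prefers_cheap": 0.8, "prefers_fast": 0.3},  # user picked the cheaper option again
]

for likelihood in observations:
    belief = bayes_update(belief, likelihood)

print(belief)
```

Each observation shifts the belief toward the hypothesis that explains it better, and the shift compounds: after two consistent choices the belief in `prefers_cheap` rises from 0.5 to roughly 0.88. An ideal Bayesian reasoner does exactly this arithmetic every time new evidence arrives.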

This isn't just about better recommendations. It's about creating tools for thought. OpenAI used its GPT-5.2 Pro model as a research partner. They gave it a recent paper on gluon interactions. They asked it to extend the findings to gravitons, a much harder problem in quantum gravity. The model did it, using a 'beautiful and surprising technique'. It produced a draft of a scientific paper. This shows a future where AI isn't just a tool that answers questions, but a collaborator that helps us discover new ones.

The Physical Frontier: AI Leaves the Screen

The most profound shift is AI breaking out of the digital world. Physical AI is here. This isn't about clunky, pre-programmed robots. It's about machines that perceive, reason, and act in the physical world. And a global platform race has begun.

In the West, it's a software play. Google just pulled its robotics unit, Intrinsic, out of the 'moonshot' division and into its core business. The goal is clear: create the 'Android for robotics'. An operating system that runs on any machine, powered by Google's AI and cloud. NVIDIA and Siemens are teaming up to build an 'Industrial AI Operating System'. They're all racing to build the stack.

In the East, it's a hardware and manufacturing play. China already installs over half of the world's industrial robots. They control the supply chain for critical components. At the Spring Festival Gala, humanoid robots performed synchronized kung fu routines. It was a spectacle, but also a statement of manufacturing prowess.

This convergence is what makes this moment different. Western platform strategy is meeting Eastern manufacturing scale. The result will be a Cambrian explosion of intelligent machines that will reconfigure how the world makes and moves things.

Building for a New Reality

You can't run 21st-century AI on 20th-century infrastructure. A new reality requires a new foundation. Most companies are not ready. A recent MIT Technology Review survey found a massive 'operational AI gap'. AI pilots are everywhere. Enterprise-wide adoption is rare. Why? Because the underlying data, systems, and workflows aren't integrated.

This extends to our most basic systems, like money. In a fascinating study, AI agents were given a blank slate and asked how they would store wealth. Their top choice, by a huge margin, was Bitcoin. They preferred stablecoins for daily transactions. Not a single model chose traditional state-backed currency as its top pick.

This isn't a prediction about the price of crypto. It's a signal. The logic of a machine-to-machine economy, optimized for instant, programmable, 24/7 settlement, will not run on banking rails that close on weekends. If autonomous agents are the future of corporate operations, our financial architecture must adapt to them.

The age of AI wonder is closing. The age of AI engineering is dawning. The winners won't be the ones with the flashiest demos. They will be the ones who master the boring, difficult, and essential work of framing the right problems, building for efficiency, engineering for reliability, and rewiring the world to run at the speed of machines.