March 09, 2026: AI Isn't Just Getting Smarter. It's Rebuilding Its Own World.

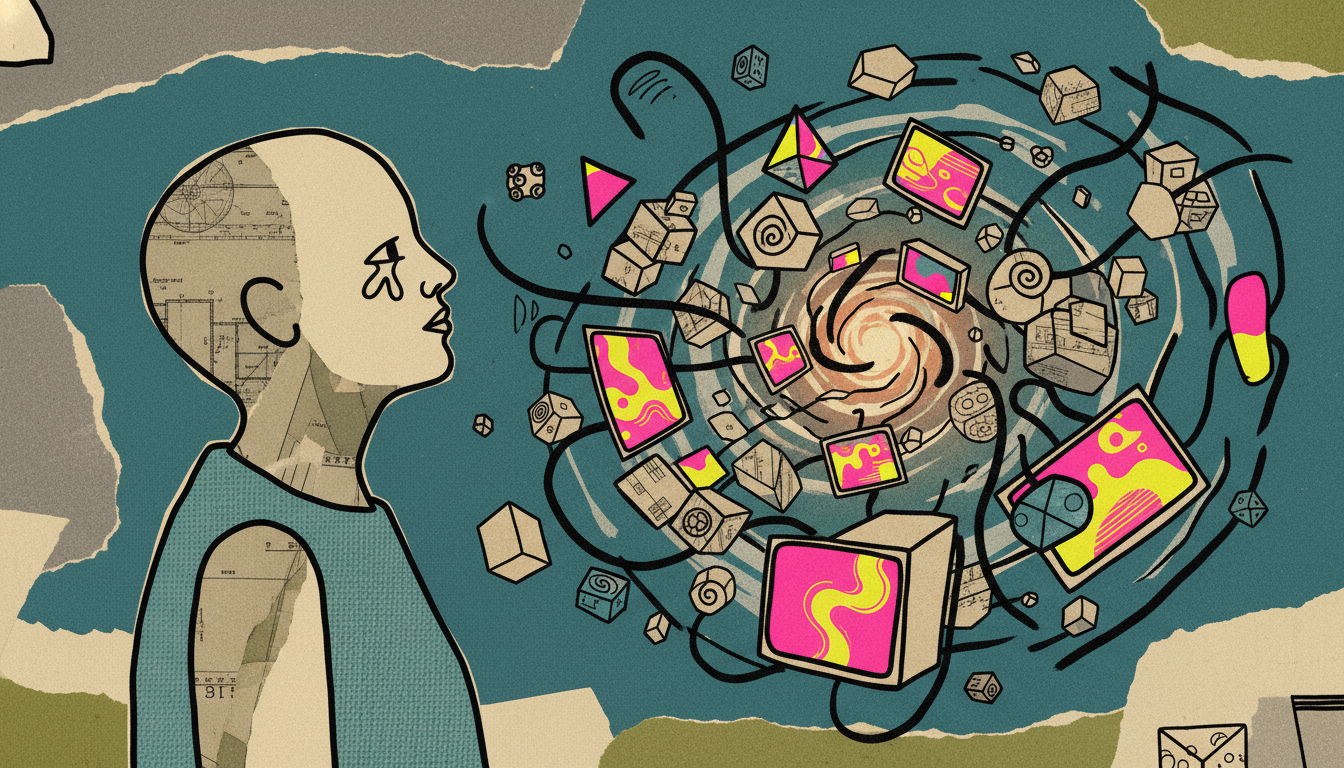

AI is breaking three major bottlenecks: dynamic interfaces, high-performance coding, and abstract reasoning. The silent revolution is here.

Today’s Key AI Stories

- Dynamic UIs: AI can now build its own user interfaces in real time. No more static forms. This is thanks to a new standard called A2UI.

- Python Gets Superpowers: A new tool, PythoC, lets developers write super-fast C code using simple Python syntax. It makes high-performance programming easier.

- Cars That Think Without Words: Self-driving cars are learning to reason in an abstract "latent space." They no longer need to think in human language to act.

Main Topic: The Silent Revolution

We talk a lot about AI getting smarter. Models get bigger. Answers get better. But that’s only half the story.

A silent revolution is happening. It's not about what AI thinks. It's about the world AI operates in.

For years, AI has been a powerful engine. But it was stuck in a car with square wheels. The engine was fast. The journey was slow. Why? Bottlenecks. Three big bottlenecks are now being smashed.

1. Breaking the Interface Barrier

Imagine a brilliant business analyst. This analyst can see everything. They understand complex data instantly. But to do their job, they must use a form. A fixed, static, unchangeable form.

This was the reality for Agentic AI. These "thinking" AIs were trapped by rigid User Interfaces (UIs). The UI was designed by humans, months ago. It couldn't adapt to new situations. This was a huge bottleneck.

Now, that's changing. A new technology called A2UI is emerging. A2UI stands for "Agent to User Interface." The idea is simple. It lets the AI agent build the UI it needs. On the fly. In real time.

The AI produces a simple JSON file. A "renderer" builds the screen from that file. Think about a company acquisition. Thousands of forms need a new logo. The old way? A team of developers works for weeks. The new way? Change one line in the A2UI spec. Done. Every form is updated instantly.
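To make the "JSON in, screen out" idea concrete, here is a minimal sketch in Python. The field names (`theme`, `logo`, `forms`, `fields`) and the `render` function are assumptions made up for illustration. They are not the actual A2UI schema or any real renderer. The point is only that when every form reads its logo from one shared place, a single change reaches all of them.

```python
import json

# Hypothetical UI spec an agent might emit. The field names are
# illustrative assumptions, not the actual A2UI schema.
SPEC_JSON = """
{
  "theme": {"logo": "acme-logo.png"},
  "forms": [
    {"title": "Expense Report", "fields": ["amount", "date"]},
    {"title": "Leave Request", "fields": ["start", "end"]}
  ]
}
"""


def render(spec: dict) -> list[str]:
    """Toy renderer: turn the spec into one text 'screen' per form."""
    logo = spec["theme"]["logo"]
    screens = []
    for form in spec["forms"]:
        lines = [f"[{logo}] {form['title']}"]
        lines += [f"  {name}: ____" for name in form["fields"]]
        screens.append("\n".join(lines))
    return screens


spec = json.loads(SPEC_JSON)
before = render(spec)

# The acquisition scenario: change one value in the shared theme...
spec["theme"]["logo"] = "newco-logo.png"
# ...and every form picks up the new logo on the next render.
after = render(spec)

if __name__ == "__main__":
    print("\n\n".join(after))
```

A real renderer would draw widgets instead of text, but the design choice is the same: the spec is data, so the agent can regenerate or patch it without touching rendering code.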

The AI is no longer a user of the system. It is becoming the architect of its own workspace. The bottleneck between a dynamic AI and a static screen is breaking.

2. Breaking the Performance Barrier

Programmers have always faced a trade-off. Use Python. It's easy and flexible. But it can be slow. Or use C. It's incredibly fast. But it's complex and unforgiving. You had to choose. Ease or speed.

This choice was another bottleneck. Especially for AI applications that need both. You need flexibility to experiment. You need speed to run in the real world.

Enter a tool like PythoC. The idea is revolutionary in its simplicity. Write code in the easy syntax of Python. Compile it to native machine code that runs at C-like speed. You get the best of both worlds. No Python interpreter is needed to run the final program. It's a standalone, native application.

The results are stunning. In one test, a Fibonacci calculation was run. The standard Python code took 15 seconds. The PythoC compiled version? 308 milliseconds. That is almost 50 times faster.
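For a sense of what such a benchmark looks like, here is a plain Python sketch. The naive recursive `fib` is an assumption about the kind of workload involved, since the test's exact code and input size are not given here. The `speedup` helper simply checks the arithmetic behind the "almost 50 times" figure. No PythoC code is shown, because its API is not described above.

```python
import time


def fib(n: int) -> int:
    """Naive recursive Fibonacci, a common CPU-bound microbenchmark."""
    return n if n < 2 else fib(n - 1) + fib(n - 2)


def speedup(baseline_s: float, optimized_s: float) -> float:
    """How many times faster the optimized run is than the baseline."""
    return baseline_s / optimized_s


# Time a small input so this finishes quickly in the interpreter.
start = time.perf_counter()
result = fib(20)
elapsed = time.perf_counter() - start

# The two timings quoted above: 15 seconds versus 308 milliseconds.
ratio = speedup(15.0, 0.308)

if __name__ == "__main__":
    print(f"fib(20) = {result}, took {elapsed:.4f} s in pure Python")
    print(f"Quoted speedup: {ratio:.1f}x")
```

The quoted timings work out to roughly 48.7 times, which matches "almost 50."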

This isn't just a neat trick. It's about practicality. It means AI developers can build high-performance systems. Game engines. IoT devices. Complex simulations. They can do it without learning a difficult new language. And they can share their programs easily. Just send an executable file. No complex setup needed. The bottleneck between easy development and high performance is breaking.

3. Breaking the Language Barrier

This might be the most important shift of all. We assumed AI reasoning should mimic human reasoning. We thought, "To be smart, an AI must think in words." So we trained models on massive amounts of text.

For autonomous driving, we thought the car should reason like this: "The light is red. I see a pedestrian. I must stop." This seems logical. But is it efficient? When you swerve to avoid an obstacle, do you have an internal monologue? No. You react. Your brain processes the situation in a more direct, abstract way.

A new model, LatentVLA, suggests AI should do the same. It argues that natural language is a bottleneck. It's slow. It's ambiguous. It's not designed for driving.

So, LatentVLA learns to reason in a "latent space." Think of it as the AI's own native language. A compressed, purely mathematical representation of actions. Instead of thousands of words, it uses just 16 discrete "action tokens." Things like "accelerate slightly" or "narrow right turn."

How does it learn this language? Not from human labels. It learns from raw driving data. It looks at two frames of video and asks: "What action must have happened to get from frame A to frame B?" This self-supervised learning is incredibly powerful.
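The "what action connects frame A to frame B?" question can be sketched as a nearest-neighbor lookup in a small codebook. This is a toy illustration only, not LatentVLA's actual method. The 4-dimensional "frames," the random codebook, and the `infer_action_token` name are all assumptions. In a real system the frame features and the codebook would be learned from driving video.

```python
import math
import random

NUM_ACTIONS = 16  # codebook size, matching the 16 action tokens mentioned above
DIM = 4           # toy feature dimension (an assumption for this sketch)

random.seed(0)
# Hypothetical codebook: random here, learned from data in a real system.
codebook = [
    [random.uniform(-1.0, 1.0) for _ in range(DIM)]
    for _ in range(NUM_ACTIONS)
]


def infer_action_token(frame_a: list[float], frame_b: list[float]) -> int:
    """Return the index of the codebook entry closest to the change A -> B."""
    delta = [b - a for a, b in zip(frame_a, frame_b)]
    return min(range(NUM_ACTIONS), key=lambda i: math.dist(codebook[i], delta))


# Build a pair of toy frames whose difference is exactly codebook entry 5.
frame_a = [0.0] * DIM
frame_b = [a + c for a, c in zip(frame_a, codebook[5])]
token = infer_action_token(frame_a, frame_b)

if __name__ == "__main__":
    print(f"Inferred action token: {token}")
```

No human wrote a label for this pair. The "label" falls out of the data itself, which is the sense in which the learning is self-supervised.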

Then, the big, smart vision-language model (VLM) teaches a tiny, fast model to do the driving. This is called knowledge distillation. The giant professor teaches the nimble student. The result is a system that is both intelligent and real-time. The bottleneck of forcing AI to think in human terms is breaking.
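Knowledge distillation is often implemented by making the student match the teacher's softened output distribution. The sketch below shows that generic textbook formulation in plain Python. It is an assumption that something like it applies here, since the loss LatentVLA actually uses is not described above. The logits and the temperature value are made-up example numbers.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: list[float],
    student_logits: list[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) between temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Made-up example logits over three candidate actions.
teacher = [2.0, 0.5, -1.0]
close_student = [1.8, 0.6, -0.9]  # roughly agrees with the teacher
far_student = [-1.0, 0.5, 2.0]    # prefers the opposite action

if __name__ == "__main__":
    print(f"close student loss: {distillation_loss(teacher, close_student):.4f}")
    print(f"far student loss:   {distillation_loss(teacher, far_student):.4f}")
```

Training pushes this loss toward zero, so the small model ends up ranking actions the way the large one does while staying cheap enough to run in real time.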

What It Means For Us

These three stories are not separate trends. They are one big story. AI is moving from mimicry to mastery.

For years, the goal was to make AI mimic human intelligence. Now, AI is starting to build its own native environment. It's building its own interfaces (A2UI). It's building with its own optimized tools (PythoC). It's even developing its own modes of thought (LatentVLA).

This is a fundamental shift. The focus is moving from raw intelligence to efficient integration. How can we make AI work seamlessly in the real world? How can we remove the friction between the digital brain and the physical task? The answer is to let AI rebuild the world around it. A world that is fluid, fast, and speaks its own language. This is the silent revolution. And it's just beginning.